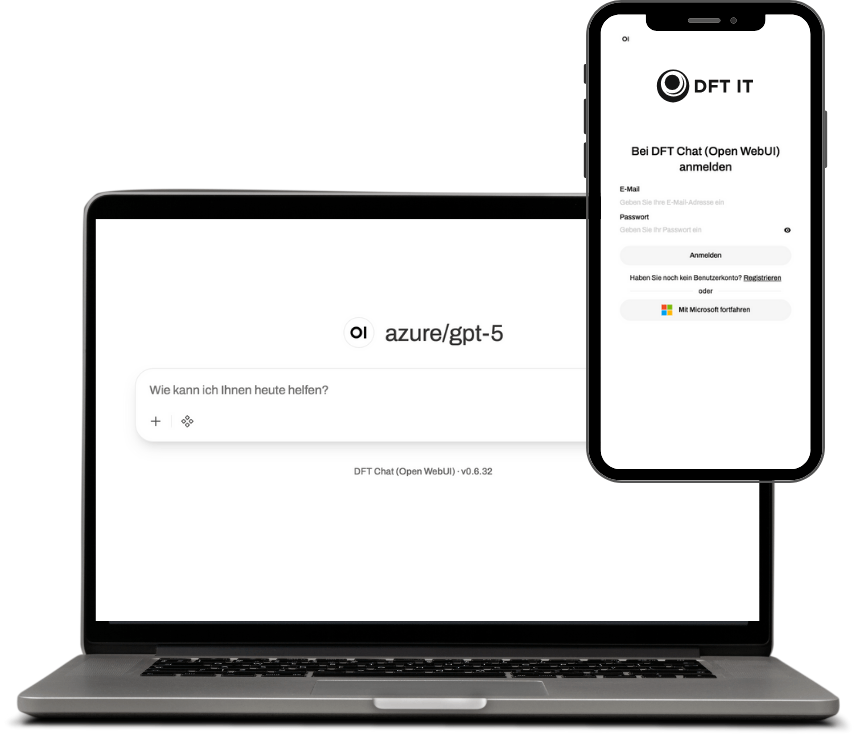

Generative AI – Your Own Chat Platform

Securely in the Azure cloud, hybrid, or fully local on your own servers: we bring Generative AI to your company, and you keep full control.

- Full data sovereignty & GDPR compliance

- Your branding, Microsoft SSO & roles

- Best models—cloud, hybrid or fully on‑premise

Three Deployment Scenarios

From cloud operations to a fully isolated on‑premise LLM—we deliver the right architecture.

Azure Cloud

Managed or Own & Control

Operated in your Azure environment with Azure OpenAI, GDPR‑compliant in EU regions and seamlessly integrated with Microsoft 365.

- GPT‑4o, GPT‑5 and other Azure models

- Single Sign‑On via Microsoft Entra ID

- EU data processing agreements (DPAs)

Hybrid Setup

Cloud + local models combined

Sensitive workloads run locally; compute‑intensive requests can optionally go to the cloud. LiteLLM handles the routing, which keeps it flexible and controllable.

- Automatic routing based on data classification

- Combines Azure OpenAI and local LLMs

- Fine‑grained policies per team or use case
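The routing idea above can be sketched in a few lines of Python. This is only an illustration of the decision logic, not LiteLLM's API and not our production configuration: the team names, classification labels, backend names and the `choose_backend` helper are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical backend names, for illustration only.
LOCAL_BACKEND = "local-llm"
CLOUD_BACKEND = "azure-openai"


@dataclass(frozen=True)
class Policy:
    """Per-team routing policy (assumed structure)."""
    # Data classifications that must stay on local infrastructure.
    local_only: frozenset
    # Whether this team may use the cloud at all.
    allow_cloud: bool = True


# Hypothetical per-team policies.
POLICIES = {
    "legal": Policy(local_only=frozenset({"confidential", "internal"})),
    "marketing": Policy(local_only=frozenset({"confidential"})),
    "r-and-d": Policy(local_only=frozenset(), allow_cloud=False),
}

# Unknown teams fall back to the safest choice: everything stays local.
DEFAULT_POLICY = Policy(local_only=frozenset(), allow_cloud=False)


def choose_backend(team: str, classification: str) -> str:
    """Return the backend a request should be sent to."""
    policy = POLICIES.get(team, DEFAULT_POLICY)
    if not policy.allow_cloud or classification in policy.local_only:
        return LOCAL_BACKEND
    return CLOUD_BACKEND


if __name__ == "__main__":
    for team, label in [("legal", "public"), ("legal", "confidential"),
                        ("marketing", "internal"), ("unknown", "public")]:
        print(team, label, "->", choose_backend(team, label))
```

Defaulting unknown teams to the local backend is a deliberate choice in this sketch: a missing policy should never cause data to leave your infrastructure.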

Fully Local (On‑Premise)

Air‑gapped on your servers

The entire LLM stack runs on your hardware, with no internet connection and no external APIs. Maximum data sovereignty for regulated industries.

- Open‑source models (Llama, Mistral, Qwen, DeepSeek etc.)

- GPU inference via Ollama, vLLM or TGI

- Air‑gapped deployment—100% offline possible

Real‑World Use Cases

How our customers put Generative AI to productive use.

Knowledge Assistant

AI chatbot with access to your internal documents, wikis and manuals via Retrieval‑Augmented Generation.

Document Analysis

Automatic extraction, summarization and classification of contracts, offers and reports.

Code & DevOps

A private coding assistant for developer teams—without your source code leaving your infrastructure.

Collaboration Models

Three paths to your own AI platform — we'll advise you on the best option.

Managed Service

Ideal for SMEs and startups seeking a ready-to-run solution with predictable monthly costs.

- Turnkey provisioning, branding and configuration

- Operations, updates, monitoring and backups included

- Access via Microsoft SSO, roles & corporate policies

- Transparent view of model/token usage costs

Own & Control

For companies with their own Azure contract seeking maximum control.

- Installation & configuration directly in your Azure environment

- Full cost control via your own Azure contract

- Admin training and handover of operations documentation

- Optional: maintenance, support retainers and further development

On-Premise LLM

For regulated industries & security-critical environments — fully local, optionally air-gapped.

- Local LLM (Llama, Mistral, Qwen etc.) on your hardware

- Hardware consulting & GPU sizing

- Model selection, fine-tuning and RAG on your data

- Operations without internet — air-gapped possible

- Maintenance, updates & support on request

Frequently Asked Questions

Answers to the most important questions about our AI platform.

Which deployment options are available?

We offer three deployment models: fully in Azure (Managed or Own & Control), hybrid with smart routing, and fully local on your own servers, optionally with no internet connection at all (air‑gapped).

Is the platform GDPR‑compliant?

Yes. In every deployment scenario, your data stays within the environment you define. Prompts are never used to train models, and we sign data processing agreements (DPAs) as needed.

Can we switch models later?

Yes. Using LiteLLM as a gateway keeps you flexible. You can add or swap models, or route requests across several of them, at any time and without changing your applications.
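Why a gateway makes this possible can be shown with a minimal Python sketch. It does not use LiteLLM itself; the `ModelGateway` class, the `chat-default` alias and the two stand-in model functions are hypothetical and only illustrate the principle that applications address a stable alias while the model behind it can change.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


class ModelGateway:
    """Maps stable aliases to interchangeable model backends (illustrative)."""

    def __init__(self) -> None:
        self._routes: Dict[str, Handler] = {}

    def register(self, alias: str, handler: Handler) -> None:
        # Registering an existing alias again swaps the backend behind it.
        self._routes[alias] = handler

    def complete(self, alias: str, prompt: str) -> str:
        if alias not in self._routes:
            raise KeyError(f"no model registered for alias {alias!r}")
        return self._routes[alias](prompt)


# Stand-ins for real model calls; they only tag the prompt with their origin.
def cloud_model(prompt: str) -> str:
    return f"[cloud] {prompt}"


def local_model(prompt: str) -> str:
    return f"[local] {prompt}"


gateway = ModelGateway()
gateway.register("chat-default", cloud_model)


def application_code(question: str) -> str:
    """The application only knows the alias, never the concrete model."""
    return gateway.complete("chat-default", question)


if __name__ == "__main__":
    print(application_code("Summarize this contract."))
    gateway.register("chat-default", local_model)  # swap the model
    print(application_code("Summarize this contract."))
```

The application function stays untouched when the model is swapped; only the gateway registration changes.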

Ready for your own AI platform?

Let's have a short, no‑obligation conversation about which deployment model (cloud, hybrid or fully local) best fits your IT and compliance requirements.